So how do you stop your face from being swapped?

Editor’s note: This article comes from the WeChat public account “Heart of the Machine” (ID: almosthuman2014), contributors: Si, Du Wei, egg sauce; republished with permission.

Face-swap videos are one of the most serious consequences of deep learning abuse. As long as your photos are online, your face may be swapped into other scenes or videos. With this open-source attack model, however, uploaded photos are no longer such a risk, because deepfake tools cannot directly use them for face replacement.

Recently, researchers from Boston University presented a new deepfake study in a paper. Judging from the paper's title and results, it seems that as long as we first pass our pictures through their model, deepfake face-swapping models can no longer use those pictures as material for short videos.

It looks very promising: just add some noise that is invisible to the human eye, and the face-swapping model can no longer generate a correct face. Isn't this exactly the idea behind adversarial attacks? Earlier attack models fooled recognition models with images that looked real but had been subtly forged. Here, the noise generated by the attack model arms the face image and deceives the deepfake model instead, so that it can no longer produce a face swap convincing enough to fool humans.
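Written as an optimization problem, this kind of "disruption" attack can be sketched as follows. This is a common general formulation, not copied from the paper: $G$ stands for the face-manipulation generator, $x$ for the source photo, $\eta$ for the added noise, and $\epsilon$ for the per-pixel budget that keeps the noise imperceptible. The noise is chosen to push the generator's output on the protected photo as far as possible from its output on the clean photo, and an iterative sign-gradient method (I-FGSM / PGD style, with step size $a$) is one standard way to search for it:

```latex
\eta^{\ast} = \arg\max_{\|\eta\|_\infty \le \epsilon} \; \big\| G(x + \eta) - G(x) \big\|_2^2

\eta_{t+1} = \operatorname{clip}_{[-\epsilon,\, \epsilon]}\!\Big( \eta_t + a \cdot \operatorname{sign}\big( \nabla_{\eta} \, \| G(x + \eta_t) - G(x) \|_2^2 \big) \Big)
```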

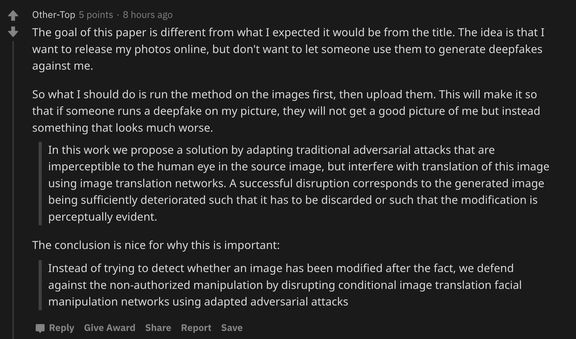

This Boston University study was released only recently and has been hotly debated by many researchers, including at length on Reddit. Since the researchers have also released a GitHub project, anyone who reads the paper may well wonder: “Can we now post our photos online without worrying about deepfakes?”

But things are certainly not as simple as we might think. Reddit user Other-Top put it this way: “According to this paper, I first need to process my photos with this method and then upload them. If others then use them to swap faces, it will go wrong.”

In other words, our own photos, and photos of celebrities, would all have to pass through the attack model before being uploaded to the Internet. And would such photos then really be safe?

This sounds rather cumbersome, but let's first look at what the paper actually does; maybe it will suggest a better approach. In the paper, the researchers add adversarial perturbations that the human eye cannot perceive to the source image, so that this adversarial noise interferes with the image the face-manipulation model generates.

As a result of this disruption, the generated image is degraded enough that it is either unusable or the manipulation becomes noticeable. In other words, invisible noise causes deepfake models to produce videos that are obviously fake.
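To make the mechanism concrete, here is a minimal sketch of an iterative sign-gradient (I-FGSM / PGD-style) disruption in NumPy. It is not the authors' code. `toy_generator` is a hypothetical stand-in for a real face-manipulation network (a single `tanh` layer with weights `W`), chosen so the gradient can be written by hand. The names `disrupt`, `eps`, `step` and `iters`, and their values, are likewise illustrative assumptions. With a real deep network you would obtain the gradient through automatic differentiation instead.

```python
import numpy as np


def toy_generator(x, W):
    """Hypothetical stand-in for a face-manipulation network: G(x) = tanh(W @ x)."""
    return np.tanh(W @ x)


def disrupt(x, W, eps=0.05, step=0.01, iters=20, seed=0):
    """Iterative sign-gradient (I-FGSM / PGD-style) search for disrupting noise.

    Looks for eta with |eta_i| <= eps that increases
    L(eta) = ||G(x + eta) - G(x)||^2, i.e. pushes the generator's output on the
    protected image away from its output on the clean image.
    """
    rng = np.random.default_rng(seed)
    y_clean = toy_generator(x, W)
    # Random start inside the eps-ball: at eta = 0 the gradient of L is exactly zero.
    eta = rng.uniform(-eps, eps, size=x.shape)
    eta = np.clip(x + eta, 0.0, 1.0) - x  # keep x + eta a valid image in [0, 1]
    for _ in range(iters):
        y = toy_generator(x + eta, W)
        # Gradient of L with respect to the input, by the chain rule through tanh.
        grad = W.T @ (2.0 * (y - y_clean) * (1.0 - y ** 2))
        eta = eta + step * np.sign(grad)      # ascend the distortion loss
        eta = np.clip(eta, -eps, eps)         # project back into the L-infinity ball
        eta = np.clip(x + eta, 0.0, 1.0) - x  # stay in the valid pixel range
    return eta


# Usage on a random 64-pixel "image" (illustrative data, not from the paper).
rng = np.random.default_rng(1)
x = rng.uniform(0.2, 0.8, size=64)
W = rng.normal(size=(64, 64)) / 8.0
eta = disrupt(x, W)
clean = toy_generator(x, W)
print("max |eta|:", np.abs(eta).max())
print("output shift:", np.linalg.norm(toy_generator(x + eta, W) - clean))
```

The noise never exceeds `eps` per pixel, yet it is aimed along the directions to which the generator is most sensitive. That is why, in this toy setting, it typically shifts the output much more than random noise of the same size would.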